AMD ROCm 7 Enables CUDA-Free LLM Fine-Tuning

AMD ROCm 7 allows CUDA-free LLM fine-tuning on MI325X hardware. Learn how this breakthrough eliminates custom kernels and challenges NVIDIA's AI dominance.

SEA-LION v4 adopts Alibaba Qwen3, shifting Southeast Asian AI infrastructure from US models to Chinese LLMs optimized for local languages.

Avoid the scaling trap. Discover why open-source AI is the smarter, cost-effective choice for solo devs and startups compared to closed-source APIs.

Discover why Thai enterprises must adopt self-hosted LLMs to ensure PDPA compliance, control costs, and maintain data sovereignty against foreign API risks.

Learn to fine-tune LLMs on 24GB GPUs using QLoRA. A step-by-step guide to adapting 7B-33B models with PEFT, Unsloth, and consumer hardware.

Learn how to build a private AI server on Windows using Ollama and Open WebUI. Secure your data with a fully local LLM setup today.

Discover why the hybrid AI strategy wins in 2026. Compare open-source LLMs like Llama 4 and proprietary models like GPT-5 for cost and reasoning.

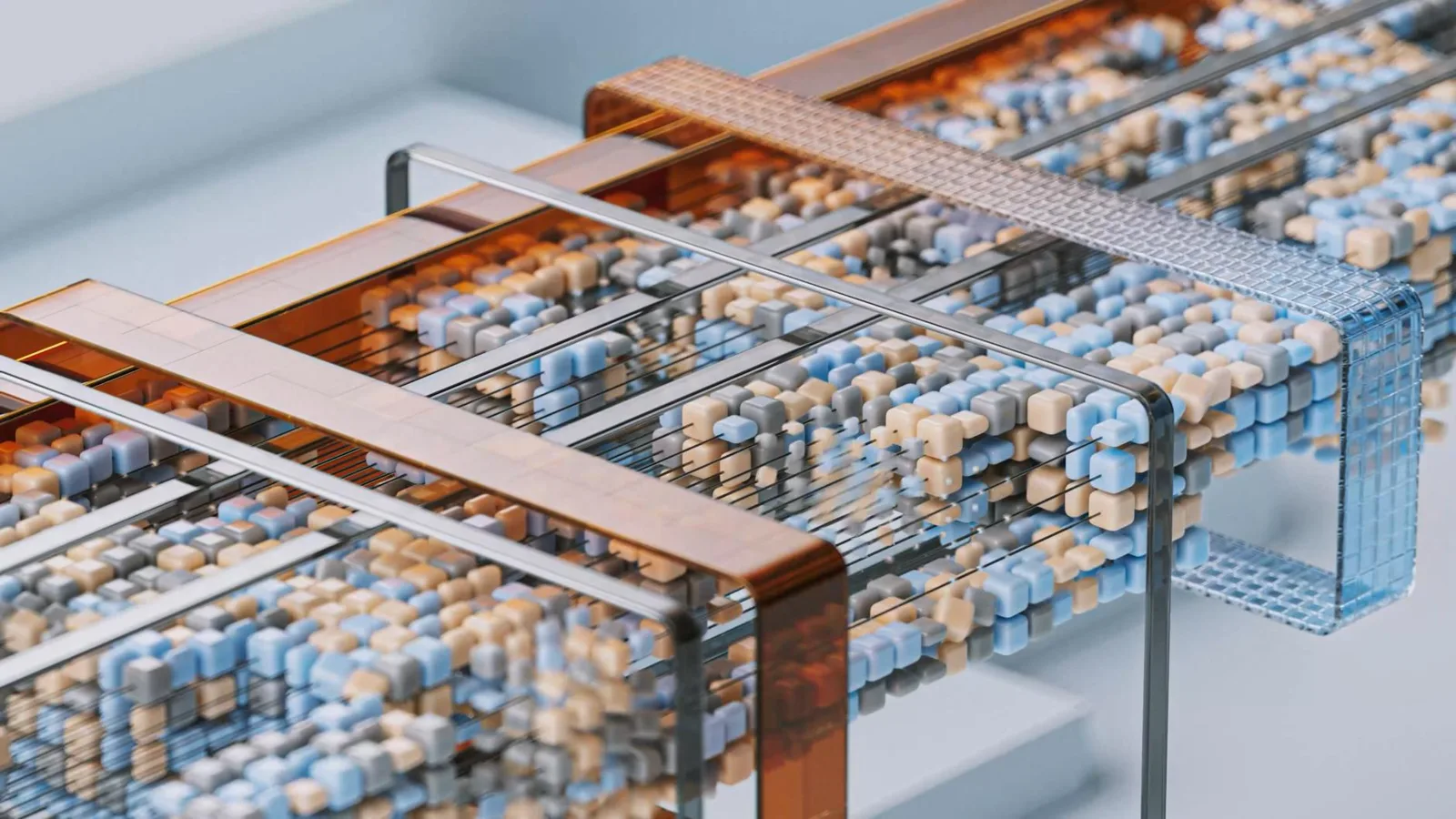

Discover why Mixture-of-Experts (MoE) replaced dense models in 2026. Learn how MoE architectures boost LLM efficiency and slash inference costs.

An in-depth look at Qwen 3.6 35B-A3B, an MoE model that runs LLM inference smoothly on a single GPU without sacrificing performance, plus guides for personal AI use.

32B–80B models now run on a single GPU with quality approaching early GPT-4. Here's what it means for how we'll actually use AI.