Gemma 4 VLA on Jetson Orin Nano: Memory Limits


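TL;DR: A published demo runs Google’s Gemma 4 VLA model on the NVIDIA Jetson Orin Nano Super (8 GB) with Parakeet STT and Kokoro TTS, and the model decides on its own when to use the webcam. Developers running the Gemma 4 e4b model on the same hardware nonetheless hit CUDA out-of-memory errors: the logs project 5,533 MiB of device memory use against 7,619 MiB of total VRAM, and the system cannot keep its 1,024 MiB free-memory target.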

Key facts

- The Gemma 4 VLA demo on Jetson Orin Nano Super uses Parakeet for speech-to-text and Kokoro for text-to-speech.

- The Jetson Orin Nano Super hardware configuration includes 8 GB of total memory.

- Developers reported CUDA out-of-memory errors on the NVIDIA developer forums on April 4 and 5, 2026, when attempting to run the Gemma 4 e4b model.

- The system projects a device memory usage of 5,533 MiB for the Gemma 4 e4b model.

- Deployment fails because the system cannot meet a critical free memory target of 1,024 MiB despite having 7,619 MiB total VRAM.

- The successful autonomous demo was published on Hugging Face on April 22, 2026.

- One user reported that the Gemma 4 e4b model runs in CPU-only mode but fails when GPU acceleration is enabled on this hardware.

Edge AI Deployment: A Tale of Two Experiences

On April 22, 2026, a demonstration of Google’s Gemma 4 Vision-Language-Action (VLA) model running on the NVIDIA Jetson Orin Nano Super highlighted the growing capability of multimodal AI on edge hardware [1, 3]. The demo, detailed on Hugging Face, chains three stages: Parakeet STT transcribes the user’s speech, Gemma 4 interprets it, and Kokoro TTS speaks the response [3]. Crucially, the model itself decides when to use a webcam for visual context, with no hardcoded logic or keyword triggers [3].

However, this successful demonstration contrasts with what other developers reported on the same hardware. Earlier in April 2026, multiple users described deployment failures, citing CUDA out-of-memory errors when attempting to run the Gemma 4 e4b model [4, 5]. The divergence underscores the tension between the promise of affordable edge AI and the tight memory constraints developers encounter in practice.

The Successful Demo: Autonomous Multimodal Interaction

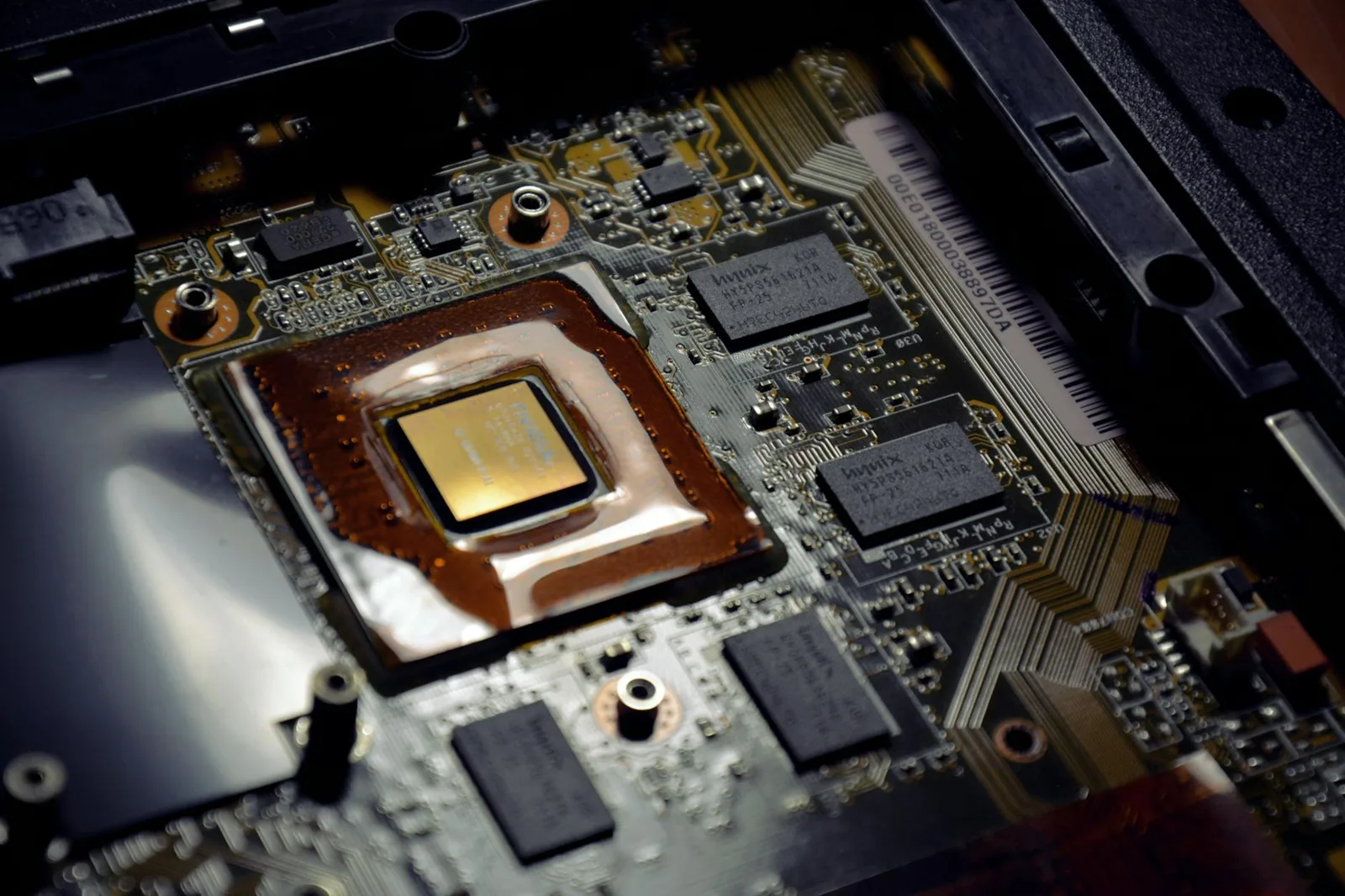

The demonstration illustrates a fully integrated voice and vision assistant running on a compact form factor [1, 3]. The hardware setup consisted of an NVIDIA Jetson Orin Nano Super with 8 GB of memory, a Logitech C920 webcam, and standard USB peripherals [3]. The software stack has three components: Parakeet for speech-to-text conversion, the Gemma 4 model for reasoning and decision-making, and Kokoro for text-to-speech synthesis [3].

What distinguishes this implementation is its autonomy. Rather than relying on fixed commands, the model evaluates the user’s query and determines whether visual input is required [3]. If the question pertains to the physical environment, the system activates the webcam to capture and interpret the scene [3]. This capability allows the device to answer questions based on real-time visual input, such as analyzing a photo or processing a document, without pre-defined triggers [3].
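The control flow described above can be sketched in a few lines. This is a minimal illustration, not the code from the demo repository: the `Reply` type, the `wants_image` flag, and the four callables passed to `run_turn` are hypothetical stand-ins for Parakeet, Gemma 4, the webcam, and Kokoro, and the actual mechanism by which the model requests an image is not described in the source.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Reply:
    """One model response (hypothetical shape, assumed for this sketch)."""
    text: str
    wants_image: bool = False  # set by the model when it needs visual context


def run_turn(
    audio: bytes,
    transcribe: Callable[[bytes], str],                   # stand-in for Parakeet STT
    ask_model: Callable[[str, Optional[bytes]], Reply],   # stand-in for Gemma 4
    capture_frame: Callable[[], bytes],                   # stand-in for the webcam
    speak: Callable[[str], bytes],                        # stand-in for Kokoro TTS
) -> bytes:
    """Run one voice turn: STT -> model -> optional webcam frame -> model -> TTS."""
    question = transcribe(audio)
    reply = ask_model(question, None)
    if reply.wants_image:
        # The model asked for visual context; no keyword matching is involved.
        frame = capture_frame()
        reply = ask_model(question, frame)
    return speak(reply.text)
```

The point of the design is that the branch depends only on what the model returns. A question that needs no visual context never touches the camera, and a question about the scene triggers exactly one capture followed by a second model call.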

The full script for this tutorial is available on GitHub in the asierarranz/Google_Gemma repository, providing a blueprint for developers interested in replicating the setup [3]. The successful execution on the Jetson Orin Nano Super suggests that optimized configurations can enable advanced AI capabilities on small, affordable chips, potentially reducing reliance on expensive cloud servers for warehouse and retail applications [1, 3].

The Developer Reality: Memory Constraints and CUDA Errors

Despite the polished demo, deployment has proven difficult for other users. On April 4, 2026, a thread on the NVIDIA developer forums titled “No luck with Gemma 4 on Jetson Nano Super” described the problem, and a second thread followed a day later [4, 5]. Users attempting to run the Gemma 4 e4b model via Docker and llama.cpp encountered persistent CUDA out-of-memory errors [4, 5].

Error logs from these attempts indicate that the Jetson Orin Nano Super has a total VRAM of 7,619 MiB [4, 5]. The system projected a device memory usage of 5,533 MiB for the model, which in theory fits within that total [4, 5]. However, the deployment failed because the system could not meet a free memory target of 1,024 MiB [4, 5]. Taken at face value, the projection leaves 2,086 MiB of the total unallocated, roughly twice the target, so the failure suggests that far less than 7,619 MiB was actually free at load time. On Jetson modules the CPU and GPU share the same physical memory, so the operating system, the container runtime, and any other running processes draw from the same pool as the model and leave insufficient headroom for stable operation.
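The arithmetic behind these figures is worth making explicit. The sketch below uses only the three numbers from the error logs and assumes the check has the simple form "free memory minus projected use must be at least the target"; that form is an assumption for illustration, not a statement of how llama.cpp implements it.

```python
TOTAL_MIB = 7619        # total VRAM reported in the error logs
PROJECTED_MIB = 5533    # projected device memory use for Gemma 4 e4b
TARGET_FREE_MIB = 1024  # free-memory target that could not be met


def required_free_mib(projected: int, target: int) -> int:
    """Free memory needed before loading so the target is still met afterwards."""
    return projected + target


def max_other_usage_mib(total: int, projected: int, target: int) -> int:
    """Most memory everything else may occupy while the check still passes."""
    return total - required_free_mib(projected, target)


if __name__ == "__main__":
    print(required_free_mib(PROJECTED_MIB, TARGET_FREE_MIB))               # 6557
    print(max_other_usage_mib(TOTAL_MIB, PROJECTED_MIB, TARGET_FREE_MIB))  # 1062
```

Under that assumption, the model loads only if at least 6,557 MiB is free beforehand, which means everything else on the device has to fit in about 1,062 MiB.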

One user noted that the model worked in CPU-only mode but failed when the GPU was enabled [5]. Since the same model loads without the GPU, the failure appears tied to GPU-side allocation and its headroom requirement rather than to the model size alone. These findings imply that the successful demo likely used specific optimizations or configuration tweaks that are not obvious from the standard deployment instructions [4, 5].

Implications for Edge AI Adoption

The juxtaposition of the successful demo and the reported failures offers a nuanced view of the current state of edge AI. On one hand, the ability to run a multimodal VLA model on a device as small and affordable as the Jetson Orin Nano Super represents a significant milestone [1, 3]. It demonstrates that complex reasoning and vision tasks are no longer the exclusive domain of large data centers [1, 3].

On the other hand, the memory constraints reported by developers serve as a cautionary tale [4, 5]. As models grow in size and complexity, the available memory on edge devices becomes a critical bottleneck [4, 5]. The 8 GB memory configuration of the Jetson Orin Nano Super, while sufficient for the optimized demo, may not be adequate for all use cases or model variants without careful management [3, 5].

For developers looking to deploy similar systems, the experience suggests that success will depend on rigorous optimization and a deep understanding of the hardware’s memory architecture [4, 5]. The availability of resources like the GitHub repository for the demo provides a starting point, but replicating the results may require additional troubleshooting and configuration adjustments [3].
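One practical first step is to measure how much memory is actually available before trying to load a model. The following is a rough sketch, assuming a Linux system that exposes `/proc/meminfo` and treating its `MemAvailable` field as an approximation of what a GPU allocation could claim on a shared-memory device. The helper names are hypothetical, and the figure CUDA itself reports may differ.

```python
from typing import Dict


def parse_meminfo_mib(text: str) -> Dict[str, int]:
    """Parse /proc/meminfo-style text into {field: MiB}, skipping non-kB lines."""
    values: Dict[str, int] = {}
    for line in text.splitlines():
        name, _, rest = line.partition(":")
        parts = rest.split()
        if len(parts) == 2 and parts[1] == "kB" and parts[0].isdigit():
            values[name.strip()] = int(parts[0]) // 1024
    return values


def has_headroom(meminfo_text: str, projected_mib: int,
                 target_free_mib: int = 1024) -> bool:
    """True if loading projected_mib would still leave target_free_mib available."""
    available = parse_meminfo_mib(meminfo_text).get("MemAvailable", 0)
    return available - projected_mib >= target_free_mib


def read_meminfo(path: str = "/proc/meminfo") -> str:
    """Read the kernel's memory summary (Linux only)."""
    with open(path) as handle:
        return handle.read()
```

On the device, `has_headroom(read_meminfo(), 5533)` gives a quick yes or no for the projected e4b footprint. If it returns `False`, stopping desktop sessions and other services before loading is the obvious next thing to try.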

Conclusion

The Gemma 4 VLA demo on the Jetson Orin Nano Super showcases the potential for autonomous, multimodal AI on edge hardware [1, 3]. However, the reports of CUDA out-of-memory errors posted on the same hardware a few weeks earlier highlight the practical challenges of deployment [4, 5]. As the industry moves towards more integrated AI solutions, bridging the gap between demonstrated capability and routine deployment will be essential for widespread adoption [1, 3]. The experience is a reminder that while the hardware is becoming more powerful, the software optimizations required to fully use it remain a significant hurdle [4, 5].

The ongoing discussions in developer forums and the availability of open-source implementations suggest a collaborative effort to overcome these challenges [4, 5]. As tools and techniques evolve, the vision of intelligent, autonomous edge devices may become more accessible to a broader range of applications and users [1, 3].

Sources

- [3] Gemma 4 VLA Demo on Jetson Orin Nano Super (huggingface.co), 2026-04-22

- [4] No luck with Gemma 4 on Jetson Nano Super (forums.developer.nvidia.com), 2026-04-04

- [5] Gemma4 e4b on Jetson Orin Nano fails due to CUDA out of memory issue (forums.developer.nvidia.com), 2026-04-05