Gemini Robotics ER 1.6: Embodied Reasoning & Safety

Google DeepMind releases Gemini Robotics-ER 1.6, enhancing embodied reasoning with instrument reading and safety compliance for industrial robots.

TL;DR: On April 14, 2026, Google DeepMind released Gemini Robotics-ER 1.6, a vision-language model that enhances embodied reasoning for real-world robotics. The update introduces instrument reading capabilities developed with Boston Dynamics and improves spatial logic and safety compliance. While it enables robots to perform complex industrial tasks, demonstrations reveal ongoing challenges in physical dexterity.

Key facts

- Google DeepMind released Gemini Robotics-ER 1.6 on April 14, 2026, as an upgrade to the reasoning-first model [1][7].

- The model specializes in visual and spatial understanding, task planning, and success detection [1][7].

- A new capability, instrument reading, allows robots to read complex gauges and sight glasses [1][7].

- The instrument reading capability was discovered through collaboration with Boston Dynamics [1][7].

- Gemini Robotics-ER 1.6 is described as the safest robotics model in the ER line to date [2][4].

- The model can respect physical constraints, such as not lifting objects over 20kg [4].

- The model is available to developers via the Gemini API and Google AI Studio [1][7].

Introduction

Google DeepMind has released Gemini Robotics-ER 1.6, an upgrade to its reasoning-first model designed to bridge the gap between artificial intelligence and physical action. Announced on April 14, 2026, this vision-language model moves beyond simple command-following: it interprets complex physical environments, plans multi-step actions, and detects when a task is complete [1][7]. The release adds capabilities aimed at industrial adoption, particularly in environments where precision and safety are paramount.

Enhanced Embodied Reasoning and Spatial Logic

At its core, Gemini Robotics-ER 1.6 is built to understand the world as robots perceive it. The model excels in visual and spatial understanding, allowing it to navigate cluttered environments and reason about objects in three-dimensional space [1][7]. A key improvement in this version is enhanced spatial pointing, which enables robots to detect, count, and reason about objects with greater accuracy [4][6]. This capability is essential for tasks that require precise manipulation and spatial awareness, such as picking specific items from a disorganized pile or navigating through narrow industrial corridors.
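As a rough illustration of how pointing output might be consumed downstream, the sketch below converts points into pixel coordinates and counts objects per label. It assumes the response is a JSON list of objects with a `point` field in `[y, x]` order normalized to 0-1000 and a `label` field, which is the format documented for earlier ER models; the article does not confirm the format for 1.6, and the sample data and helper names are hypothetical.

```python
import json


def points_to_pixels(response_text, width, height):
    """Convert normalized [y, x] points (assumed 0-1000) to pixel (x, y) tuples."""
    items = json.loads(response_text)
    results = []
    for item in items:
        y_norm, x_norm = item["point"]  # assumed order: y first, then x
        x_px = round(x_norm * width / 1000)
        y_px = round(y_norm * height / 1000)
        results.append({"label": item["label"], "pixel": (x_px, y_px)})
    return results


def count_by_label(points):
    """Count how many points were returned for each label."""
    counts = {}
    for p in points:
        counts[p["label"]] = counts.get(p["label"], 0) + 1
    return counts


# Hypothetical model output for a 640x480 camera frame.
sample = (
    '[{"point": [500, 250], "label": "bolt"},'
    ' {"point": [100, 900], "label": "bolt"},'
    ' {"point": [750, 500], "label": "wrench"}]'
)

pixels = points_to_pixels(sample, width=640, height=480)
print(pixels[0])               # {'label': 'bolt', 'pixel': (160, 240)}
print(count_by_label(pixels))  # {'bolt': 2, 'wrench': 1}
```

Keeping the conversion separate from the counting step makes it easier to swap in a different coordinate convention if the actual response format differs.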

The model also features advanced success detection mechanisms. By utilizing multiple camera views, Gemini Robotics-ER 1.6 can determine whether a task has been completed successfully, even in visually complex or cluttered settings [4][6]. This multi-view understanding reduces the likelihood of false positives and ensures that robots can autonomously verify the outcome of their actions, a crucial step towards reliable autonomy in real-world applications.
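The sources do not describe how the model combines camera views internally. Purely to illustrate why a second view can reduce false positives, the sketch below applies a conservative rule on the caller side: a task counts as done only when enough views agree. The function name, view names, and the agreement rule are all hypothetical.

```python
def task_succeeded(view_verdicts, min_agreeing=None):
    """Return True only when enough camera views report success.

    view_verdicts maps a (hypothetical) camera name to a boolean verdict.
    By default every view must agree, which is the most conservative rule.
    """
    if not view_verdicts:
        return False  # no evidence is treated as "not done"
    required = len(view_verdicts) if min_agreeing is None else min_agreeing
    agreeing = sum(1 for ok in view_verdicts.values() if ok)
    return agreeing >= required


single_view = {"overhead": True}
two_views = {"overhead": True, "wrist": False}

print(task_succeeded(single_view))                # True
print(task_succeeded(two_views))                  # False
print(task_succeeded(two_views, min_agreeing=1))  # True
```

In this toy case the overhead camera alone would report success, while the wrist camera disagrees, so the stricter rule withholds confirmation.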

Instrument Reading: A Breakthrough for Industrial Robotics

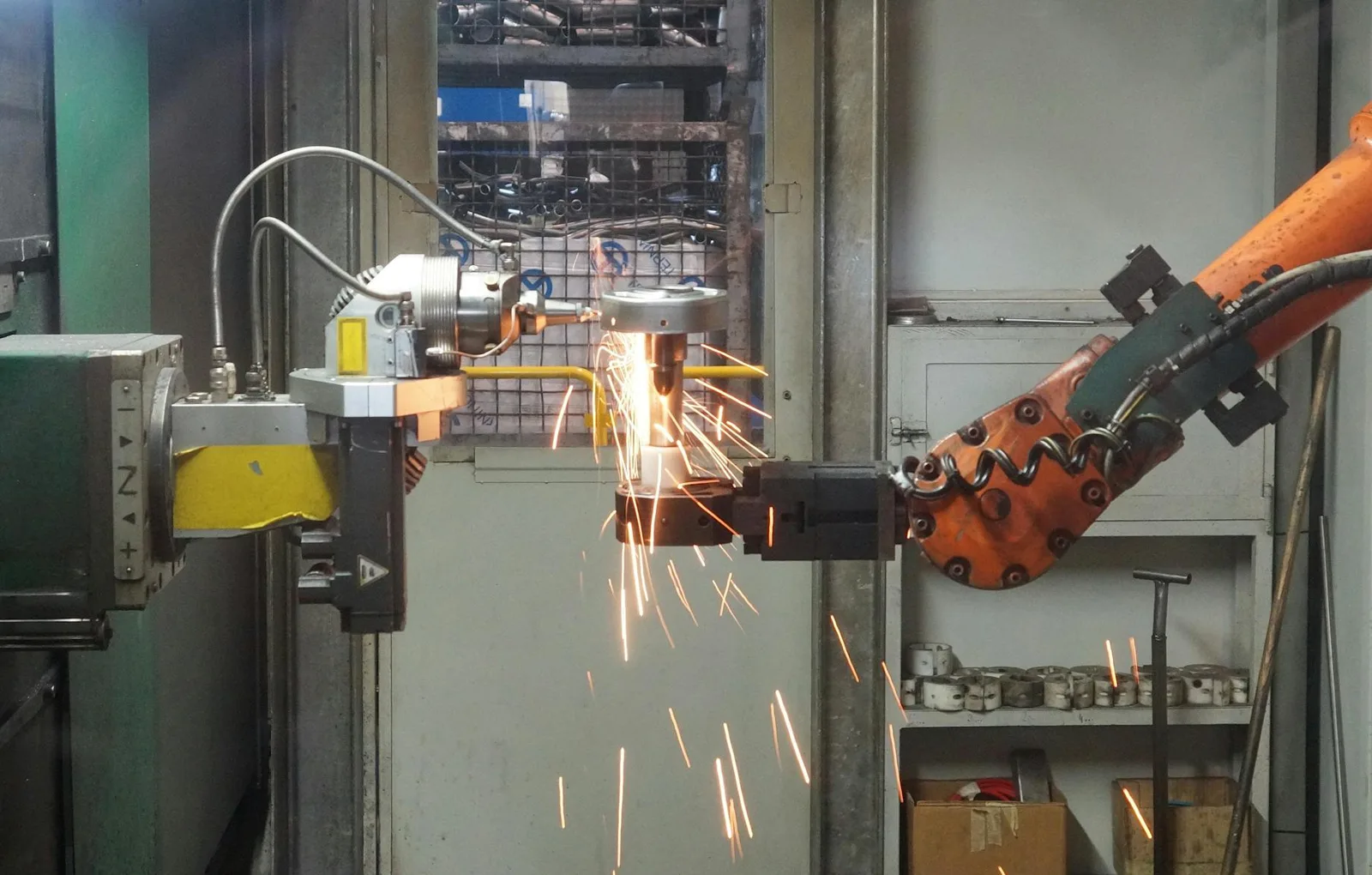

One of the most notable additions in Gemini Robotics-ER 1.6 is the ability to read complex instruments, such as gauges and sight glasses [1][7]. The capability grew out of a close collaboration with Boston Dynamics, reflecting practical needs encountered when deploying AI in industrial settings [1][7]. By interpreting physical instruments, robots running the model can perform inspection tasks that previously required human operators.

The integration of this capability was demonstrated using Boston Dynamics’ Spot robot, which was equipped with Gemini Robotics-ER 1.6 to conduct industrial inspections [2][8]. In these demonstrations, Spot was able to read handwritten to-do lists and identify potential hazards, such as pooled water, showcasing the model’s ability to process both structured and unstructured visual information [8]. This level of perception allows robots to adapt to dynamic environments and make informed decisions based on real-time visual data.

Safety and Physical Constraints

Safety is a critical concern in robotics, and Google DeepMind has emphasized that Gemini Robotics-ER 1.6 is the safest model in the ER line to date [2][4]. The model demonstrates superior compliance with safety policies, including the ability to proactively avoid objects and respect physical constraints [2][4]. For instance, the model can be programmed to recognize and adhere to weight limits, such as not lifting objects over 20kg, thereby preventing potential damage to the robot or its environment [4].
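The article does not explain how the model enforces such limits. As a complementary illustration, a system integrator might add a caller-side check on planned steps before execution. The sketch below is an assumption throughout: the `PlannedStep` structure, its field names, and the rule that an unknown mass is treated as unsafe are hypothetical; only the 20 kg figure comes from the text above.

```python
from dataclasses import dataclass
from typing import Optional

MAX_LIFT_KG = 20.0  # limit quoted in the article


@dataclass
class PlannedStep:
    """Hypothetical representation of one step in a robot plan."""
    action: str
    target: str
    estimated_mass_kg: Optional[float] = None


def violates_lift_limit(step, limit_kg=MAX_LIFT_KG):
    """Return True if a lift step exceeds the limit or has unknown mass."""
    if step.action != "lift":
        return False
    if step.estimated_mass_kg is None:
        return True  # assumption: unknown mass is treated as unsafe
    return step.estimated_mass_kg > limit_kg


plan = [
    PlannedStep("move", "pallet bay"),
    PlannedStep("lift", "toolbox", estimated_mass_kg=12.5),
    PlannedStep("lift", "crate", estimated_mass_kg=25.0),
]

blocked = [s.target for s in plan if violates_lift_limit(s)]
print(blocked)  # ['crate']
```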

This focus on safety is particularly important as robots are increasingly deployed in shared spaces with humans. By ensuring that robots can understand and respect physical boundaries and safety protocols, Gemini Robotics-ER 1.6 helps mitigate risks and builds trust in autonomous systems. The model’s ability to reason about safety constraints in real-time is a significant step towards the widespread adoption of robots in sensitive environments such as manufacturing plants, warehouses, and healthcare facilities.

Execution Gaps and Physical Challenges

Despite its advanced reasoning capabilities, the release of Gemini Robotics-ER 1.6 also highlights the ongoing challenges in physical execution. During demonstrations, the model’s integration with Spot revealed instances where the robot struggled with dexterity, such as gripping a can sideways [8]. These execution gaps underscore the complexity of translating high-level reasoning into precise physical actions, a challenge that remains at the forefront of robotics research.

The disparity between cognitive reasoning and physical execution is a well-known issue in embodied AI. While Gemini Robotics-ER 1.6 excels at understanding and planning, the actual manipulation of objects requires a level of fine motor control and adaptability that is still being refined. These limitations serve as a reminder that while AI models are becoming increasingly sophisticated, the physical world remains inherently unpredictable and challenging to navigate.

Developer Access and Integration

Google DeepMind has made Gemini Robotics-ER 1.6 available to developers through the Gemini API and Google AI Studio, facilitating broader experimentation and integration into existing robotics frameworks [1][7]. Developers already working with the ER line can upgrade from version 1.5 to 1.6 by changing the model name in the API call [5].
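A minimal sketch of what that change might look like when building a Gemini API `generateContent` request by hand. The 1.6 model identifier below is an assumption patterned on the 1.5 preview name, and both identifiers should be checked against the model list in the Gemini API documentation. The sketch only constructs the request and does not send it.

```python
import json

API_ROOT = "https://generativelanguage.googleapis.com/v1beta"

MODEL_ER_1_5 = "gemini-robotics-er-1.5-preview"
MODEL_ER_1_6 = "gemini-robotics-er-1.6-preview"  # assumed name, verify in the docs


def build_request(model, prompt):
    """Build the URL and JSON body for a text-only generateContent call."""
    url = f"{API_ROOT}/models/{model}:generateContent"
    body = {"contents": [{"parts": [{"text": prompt}]}]}
    return url, json.dumps(body)


prompt = "List the steps needed to inspect the pressure gauge."

old_url, old_body = build_request(MODEL_ER_1_5, prompt)
new_url, new_body = build_request(MODEL_ER_1_6, prompt)

# Only the model segment of the URL changes; the request body is identical.
print(old_body == new_body)  # True
print(new_url)
```

Keeping the model name in a single constant means the upgrade touches one line rather than every call site.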

The model functions as a high-level orchestrator, capable of calling external tools such as Google Search and integrating with third-party vision-language-action models [3][7]. This flexibility allows developers to build more complex and capable robotic systems by combining the reasoning strengths of Gemini Robotics-ER 1.6 with specialized tools and algorithms. The ability to interact with external resources enhances the model’s utility, enabling robots to access up-to-date information and leverage additional computational resources when needed.
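The orchestrator pattern can be sketched as a plan of tool calls dispatched to registered handlers. Everything in this example is hypothetical: the plan format, the tool names, and the stub functions stand in for whatever a real system would use for search and for a vision-language-action model, and none of it reflects the actual Gemini API function-calling interface.

```python
def search_stub(query):
    """Stand-in for an external search tool."""
    return f"results for: {query}"


def vla_stub(instruction):
    """Stand-in for a third-party vision-language-action model."""
    return f"executed: {instruction}"


TOOLS = {"search": search_stub, "vla": vla_stub}


def run_plan(plan, tools=TOOLS):
    """Dispatch each planned step to its tool and collect the results."""
    results = []
    for step in plan:
        tool = tools.get(step["tool"])
        if tool is None:
            # Unknown tools are reported rather than raising, so the
            # orchestrator can decide how to recover.
            results.append({"tool": step["tool"], "error": "unknown tool"})
            continue
        results.append({"tool": step["tool"], "output": tool(**step["args"])})
    return results


plan = [
    {"tool": "search", "args": {"query": "valve shutoff procedure"}},
    {"tool": "vla", "args": {"instruction": "close the valve"}},
    {"tool": "welder", "args": {}},
]

for result in run_plan(plan):
    print(result)
```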

Industry Impact and Future Outlook

The release of Gemini Robotics-ER 1.6 has been met with enthusiasm from the robotics community, with many noting its potential to accelerate the adoption of AI in industrial settings [2][4]. Marco da Silva, VP and GM of Spot at Boston Dynamics, stated that the model marks an important step toward robots operating in the physical world, emphasizing the collaborative efforts between AI research and practical robotics applications [8].

As the technology continues to evolve, the focus will likely shift towards addressing the remaining challenges in physical execution and enhancing the model’s ability to adapt to unforeseen circumstances. The collaboration between Google DeepMind and Boston Dynamics serves as a model for future partnerships, where theoretical advancements in AI are closely aligned with the practical needs of industrial robotics.

In conclusion, Gemini Robotics-ER 1.6 represents a significant milestone in the development of embodied AI. By combining enhanced reasoning, safety compliance, and practical capabilities like instrument reading, the model brings us closer to a future where robots can autonomously perform complex tasks in real-world environments. While challenges remain, the progress made with this release underscores the potential of AI to transform industries and improve efficiency in ways previously thought impossible.

Sources

- Gemini Robotics ER-1.6 enhances reasoning to help robots navigate real-world tasks. (blog.google) — 2026-04-14

- Gemini Robotics ER 1.6: Enhanced Embodied Reasoning (deepmind.google) — 2026-04-14

- Google DeepMind Releases Gemini Robotics-ER 1.6 with Embodied Reasoning | Marc Theermann posted on the topic | LinkedIn (www.linkedin.com) — 2026-04-27

- Google’s new AI helps robots understand and act in real world (interestingengineering.com) — 2026-04-14

- Gemini Robotics ER 1.6: Enhanced Embodied Reasoning | Tom Lue (www.linkedin.com) — 2026-04-17

- Spot robot gets Gemini AI to boost real-world inspection tasks (interestingengineering.com) — 2026-04-15

- Gemini Robotics-ER 1.6 | Gemini API | Google AI for Developers (ai.google.dev) — 2026-05-01

- Gemini Robotics-ER 1.6 (deepmind.google)