moe llm ai-architecture

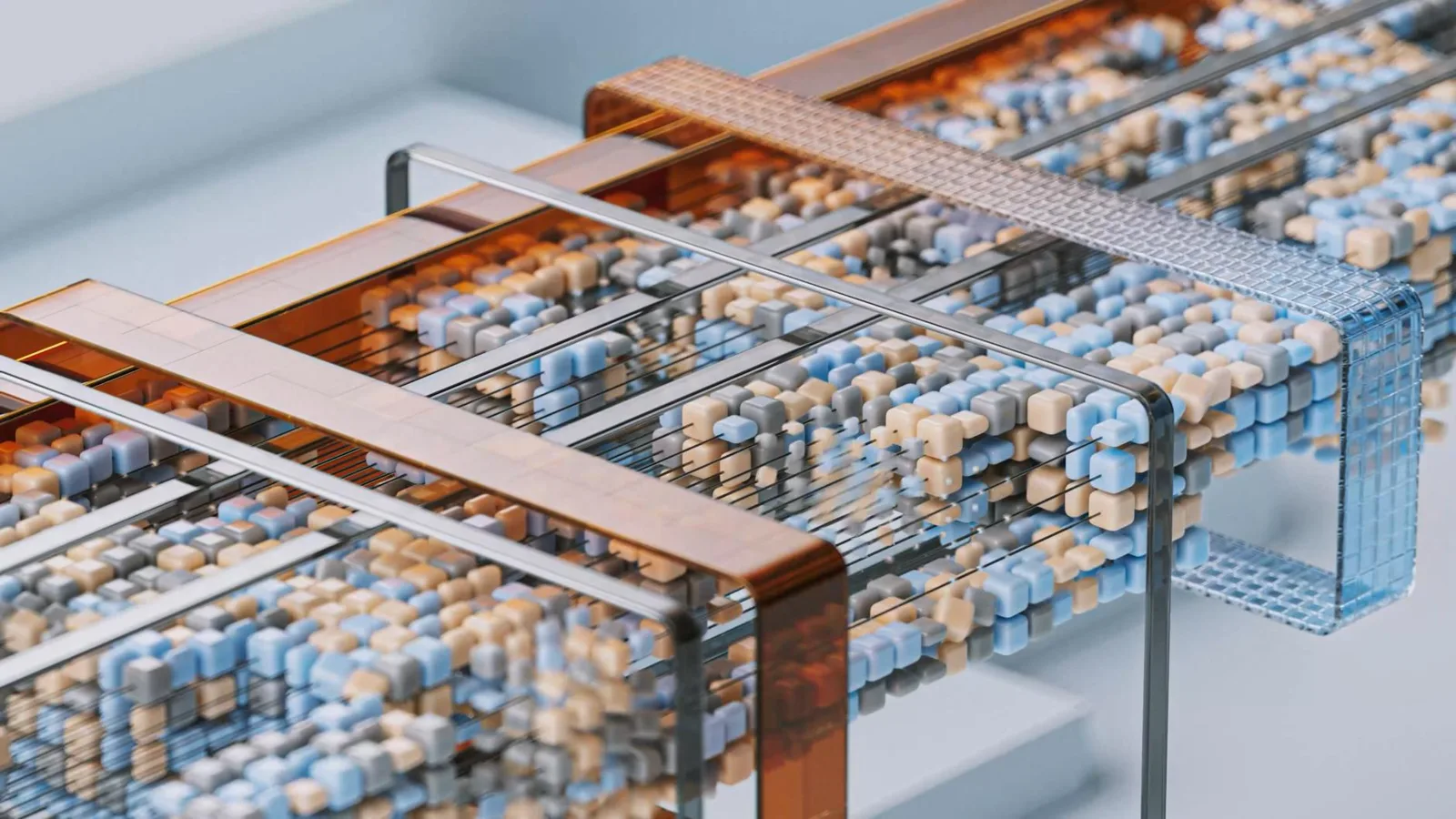

Mixture-of-Experts (MoE): Why 2026 LLMs Chose Efficiency

Discover why Mixture-of-Experts (MoE) replaced dense models in 2026. Learn how MoE architectures boost LLM efficiency and slash inference costs.
